We are delighted to announce that our paper “MuSLR: Multimodal Symbolic Logical Reasoning” has received the Best Paper Award at the AAAI 2026 Bridge Program on Logical and Symbolic Reasoning in Language Models, held in Singapore.

About the Paper

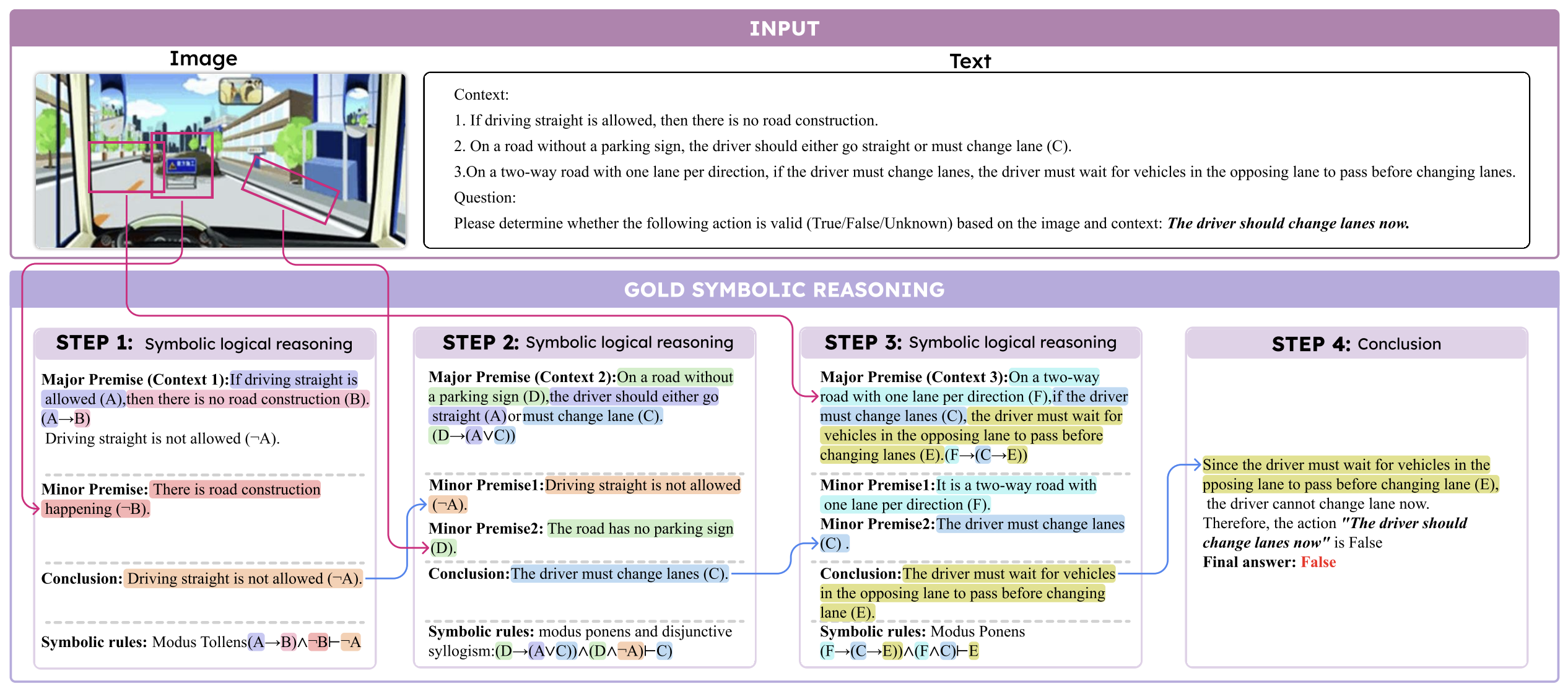

Multimodal symbolic logical reasoning, which aims to deduce new facts from multimodal input via formal logic, is critical in high-stakes applications such as autonomous driving and medical diagnosis, where rigorous, deterministic reasoning helps prevent serious consequences. To evaluate this capability in current state-of-the-art vision-language models (VLMs), we introduce MuSLR, the first benchmark for multimodal symbolic logical reasoning grounded in formal logical rules.

Our evaluation of top VLMs shows that they struggle substantially with multimodal symbolic logical inference. To address this, we propose LogiCAM, a modular framework that systematically applies formal logical rules to multimodal inputs through a Chain-of-Thought (CoT) mechanism. The approach decomposes complex reasoning into trackable modules, enabling rigorous, systematic deduction and delivering significant performance gains on complex logics.

About the Workshop

The AAAI 2026 Bridge Program on Logical and Symbolic Reasoning in Language Models aims to explore and expand the intersection of AI and logic. It serves as a premier platform for systematic discussion of new applications of logical methods in AI, with a special focus on enhancing the logical and symbolic reasoning abilities of large language models (LLMs) and on integrating neural networks with symbolic methods.

📅 Awarded on: March 02, 2026

📍 Conference: Logical and Symbolic Reasoning in Language Models Bridge Program @ AAAI 2026

✍️ Authors: Jundong Xu, Hao Fei, Yuhui Zhang, Liangming Pan, Qijun Huang, Qian Liu, Preslav Nakov, Min-Yen Kan, William Yang Wang, Mong-Li Lee, Wynne Hsu